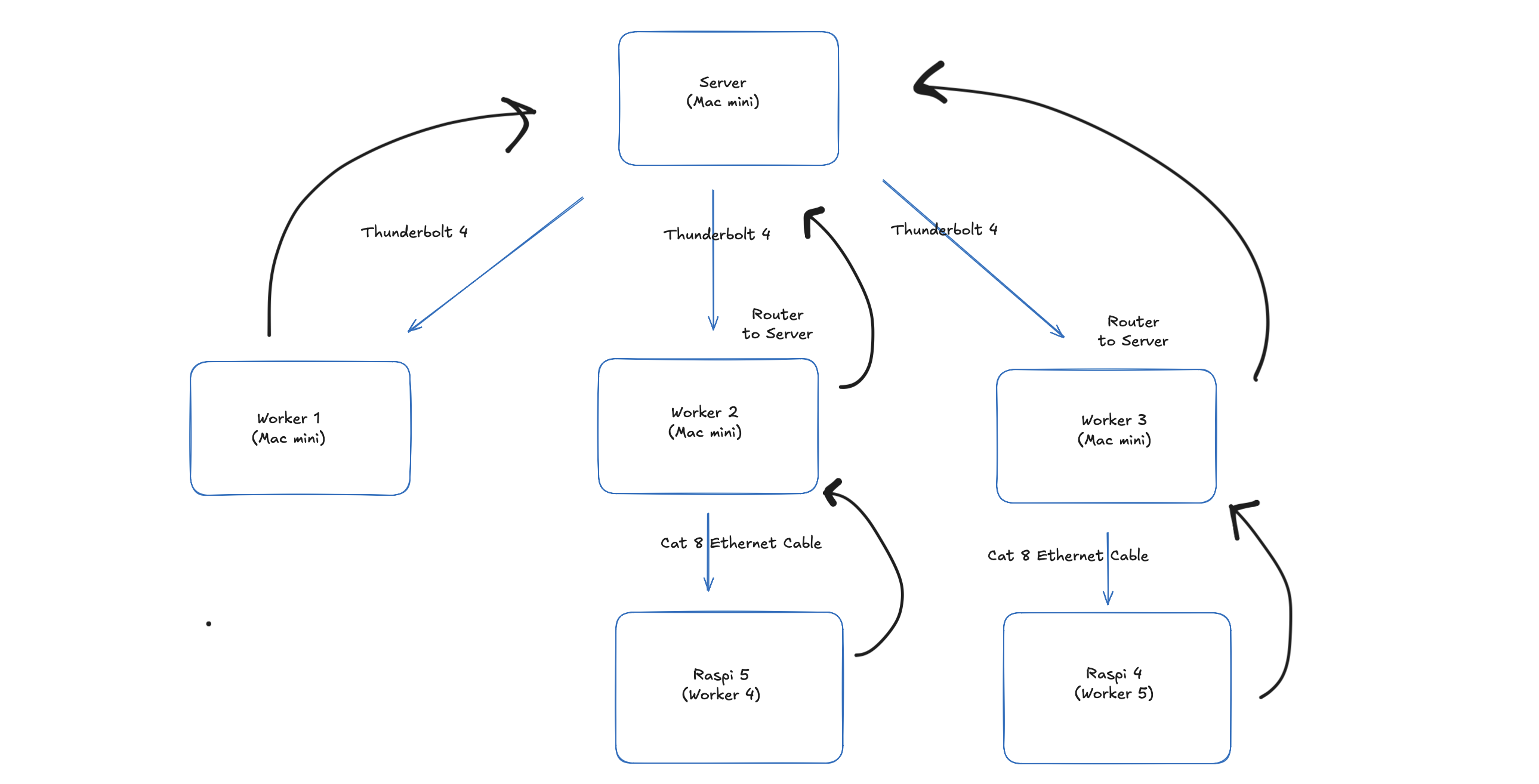

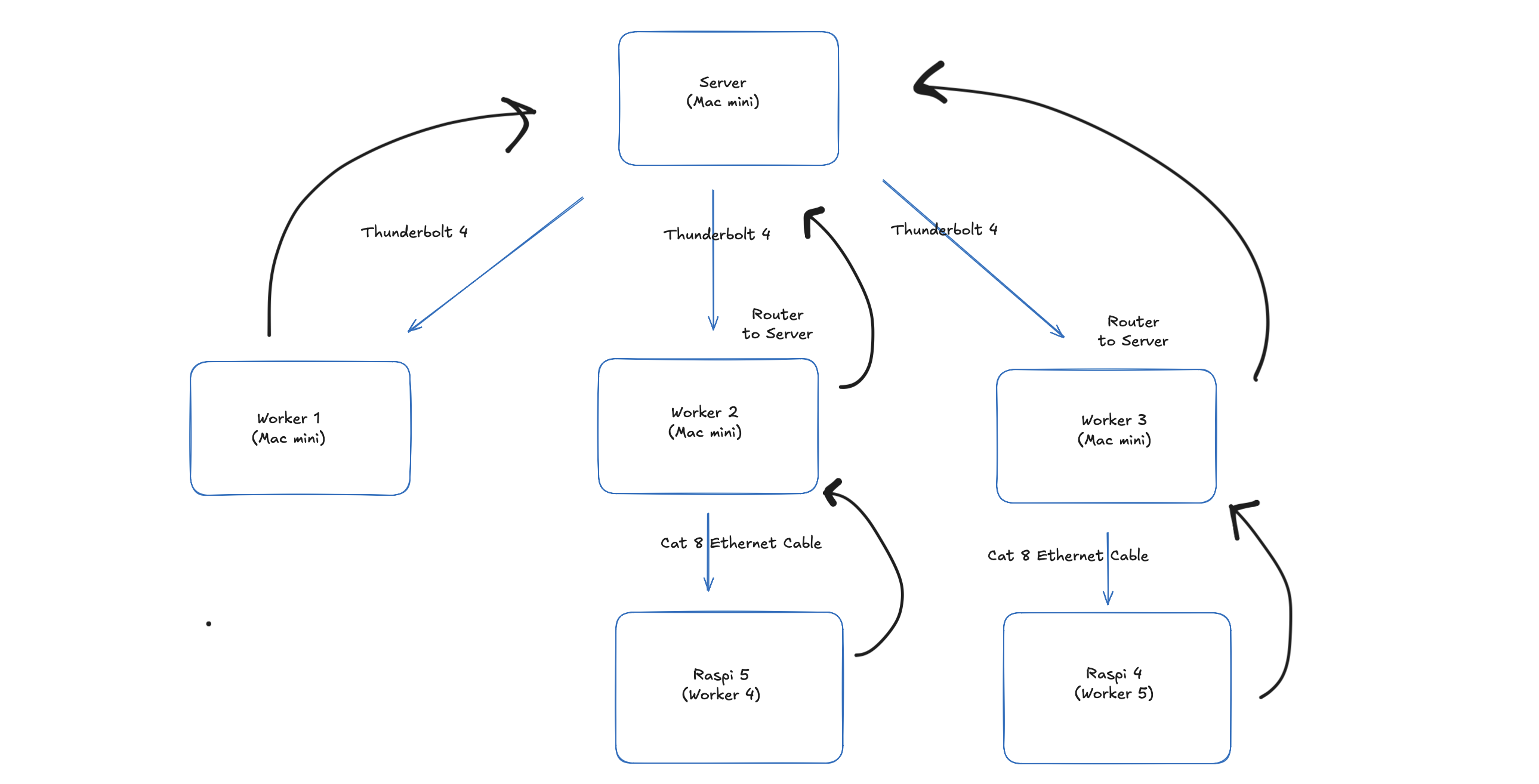

Cluster Architecture

Distributed Deep Learning Library for Heterogeneous Hardware

Train and run inference on neural networks with PyTorch across heterogeneous hardware (Mac minis, Raspberry Pis, MacBooks, and Windows machines) using only Python sockets.

smolcluster is a distributed deep learning library designed for training neural networks across heterogeneous hardware using PyTorch and socket-based communication. It enables researchers and developers to leverage multiple machines with different capabilities for distributed training and inference.
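To make "socket-based communication" concrete, here is a minimal sketch of length-prefixed message framing over plain Python sockets. It is not smolcluster's actual wire protocol: the helper names `send_msg`, `recv_exact`, and `recv_msg`, the JSON encoding, and the 4-byte header are all assumptions chosen for illustration, and `socket.socketpair()` stands in for a real coordinator/worker TCP connection.

```python
import json
import socket
import struct

# Hypothetical framing: 4-byte big-endian length, then a UTF-8 JSON payload.
HEADER = struct.Struct("!I")

def send_msg(sock, obj):
    """Serialize obj as JSON and send it with a length prefix."""
    payload = json.dumps(obj).encode("utf-8")
    sock.sendall(HEADER.pack(len(payload)) + payload)

def recv_exact(sock, n):
    """Read exactly n bytes; recv() may return fewer than requested."""
    buf = bytearray()
    while len(buf) < n:
        chunk = sock.recv(n - len(buf))
        if not chunk:
            raise ConnectionError("socket closed mid-message")
        buf.extend(chunk)
    return bytes(buf)

def recv_msg(sock):
    """Read one length-prefixed JSON message and decode it."""
    (length,) = HEADER.unpack(recv_exact(sock, HEADER.size))
    return json.loads(recv_exact(sock, length).decode("utf-8"))

# A connected socket pair stands in for a worker-to-coordinator link.
coordinator, worker = socket.socketpair()
try:
    send_msg(worker, {"rank": 1, "grads": [0.5, -0.25]})
    received = recv_msg(coordinator)
finally:
    coordinator.close()
    worker.close()

print(received)
```

The length prefix matters because TCP is a byte stream: without it, the receiver cannot tell where one message ends and the next begins.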

The library supports various distributed training algorithms including Fully Sharded Data Parallelism (FSDP), Classic Data Parallelism (ClassicDP), Elastic Distributed Parallelism (EDP), Model Parallelism, Model Parallelism with Pipeline, and Expert Parallelism. It runs on diverse hardware including Mac minis, Raspberry Pis, MacBooks, and Windows machines.
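As an illustration of the idea behind classic data parallelism, the sketch below has each worker compute a gradient on its own shard of the batch, then averages the gradients before one shared update. It uses plain Python and a one-parameter model `y = w * x` to stay self-contained; the function names (`grad_mse`, `shard`, `data_parallel_step`) are hypothetical and do not come from smolcluster.

```python
def grad_mse(w, xs, ys):
    """Gradient of mean squared error with respect to w for y = w * x."""
    n = len(xs)
    return sum(2.0 * (w * x - y) * x for x, y in zip(xs, ys)) / n

def shard(seq, num_workers):
    """Split seq into contiguous equal-size shards (any remainder is dropped)."""
    size = len(seq) // num_workers
    return [seq[i * size:(i + 1) * size] for i in range(num_workers)]

def data_parallel_step(w, xs, ys, num_workers, lr):
    """One update: per-worker local gradients, averaged, applied once."""
    x_shards = shard(xs, num_workers)
    y_shards = shard(ys, num_workers)
    local_grads = [grad_mse(w, xs_i, ys_i)
                   for xs_i, ys_i in zip(x_shards, y_shards)]
    avg_grad = sum(local_grads) / num_workers
    return w - lr * avg_grad, avg_grad

xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

w_new, avg_grad = data_parallel_step(0.0, xs, ys, num_workers=2, lr=0.01)
full_grad = grad_mse(0.0, xs, ys)  # single-machine gradient on the full batch
print(avg_grad, full_grad, w_new)
```

With equal-size shards, the averaged gradient matches the full-batch gradient, which is why the workers can stay in sync by exchanging only gradients.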

Step-by-step setup guide for Mac Mini (Thunderbolt) and Jetson / home router clusters, with commands ready to copy.

git clone https://github.com/YuvrajSingh-mist/smolcluster.git

cd smolcluster

uv sync

# launch training

bash scripts/launch_edp_train_gpt.sh

# launch inference

bash scripts/inference/launch_mp_inference.sh

bash scripts/inference/launch_api.sh

Run `grove start <script> -n N` on the coordinator and `grove join` on each worker.

Train and run inference across heterogeneous hardware including Mac minis, Raspberry Pis, MacBooks, Windows machines, and iPad clients.

Data Parallelism (DP) has built-in support for models from the Hugging Face Transformers library. Model Parallelism (MP) currently supports GPT-2 (117M), with support for additional models coming soon.

smolcluster implements a distributed training system designed from the ground up for heterogeneous hardware. The library supports multiple distributed training paradigms, each optimized for different cluster configurations and network topologies.
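For model parallelism, one basic step is deciding which layers live on which machine. The sketch below assigns contiguous blocks of layers to workers as evenly as possible. It is an assumption-laden illustration, not smolcluster's partitioning logic: `partition_layers` is a hypothetical helper, and the example uses 12 layers because GPT-2 (117M) has 12 transformer blocks.

```python
def partition_layers(num_layers, num_workers):
    """Assign contiguous layer index ranges to workers, as evenly as possible.

    The first (num_layers % num_workers) workers each take one extra layer.
    """
    if num_workers <= 0 or num_layers < num_workers:
        raise ValueError("need at least one layer per worker")
    base, extra = divmod(num_layers, num_workers)
    ranges = []
    start = 0
    for rank in range(num_workers):
        count = base + (1 if rank < extra else 0)
        ranges.append(range(start, start + count))
        start += count
    return ranges

# 12 transformer blocks spread over 5 hypothetical workers.
assignment = partition_layers(12, 5)
for rank, layers in enumerate(assignment):
    print(f"worker {rank}: layers {list(layers)}")
```

On heterogeneous hardware an even split is rarely ideal; a real scheduler would likely weight the split by each device's memory and throughput, which this sketch does not attempt.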

Comprehensive guides to help you get the most out of smolcluster:

smolcluster is released under the MIT License.

Contributions are welcome! Visit the GitHub repository to get involved.