Note: Smolcluster requires a distributed hardware setup and network configuration before you can begin training, so installation involves more than installing a package.

Prerequisites

Before using Smolcluster, you need to set up your distributed cluster:

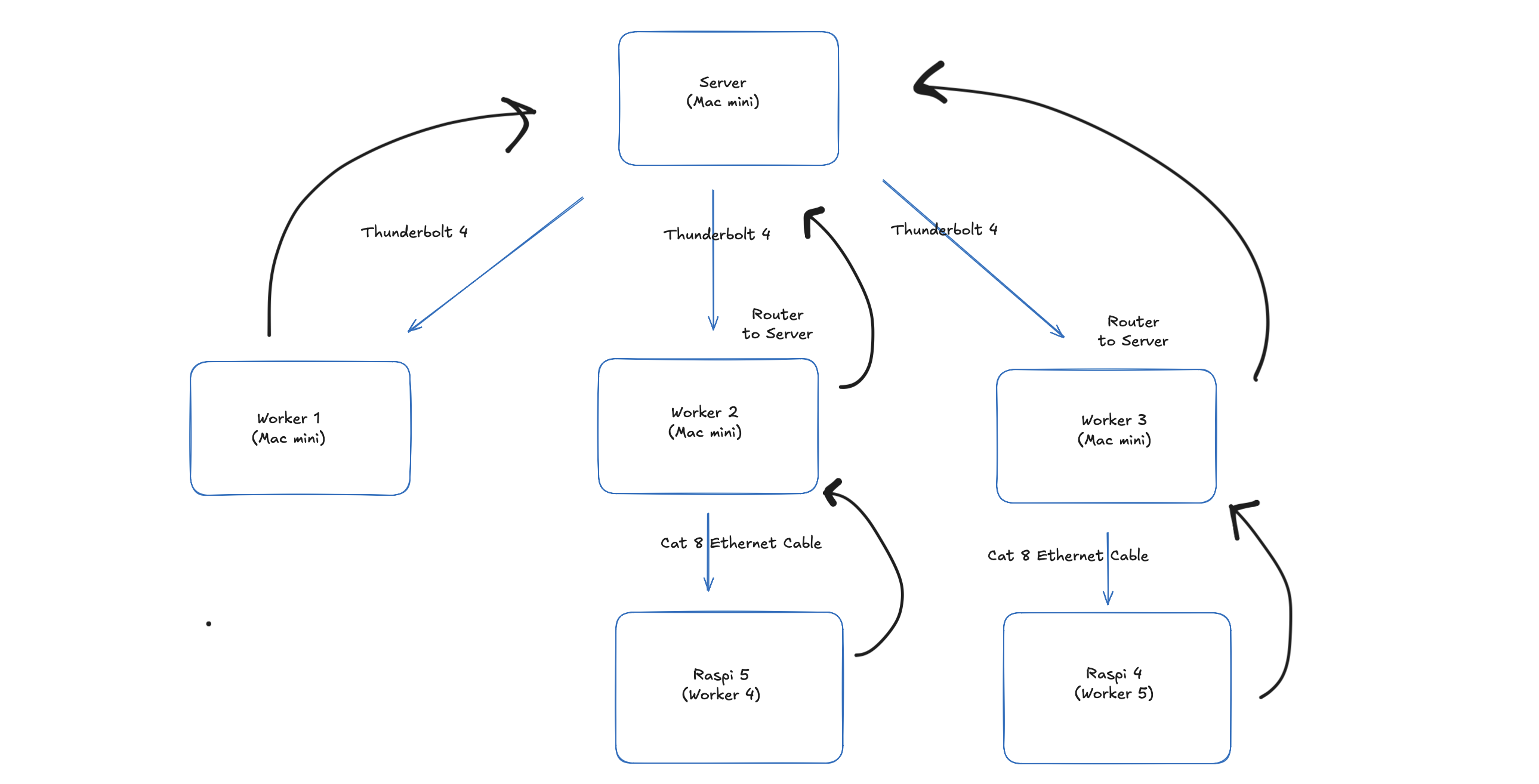

- Hardware Setup: Configure your machines (Mac minis, Raspberry Pis, GPUs, etc.)
- Network Configuration:
  - Mac minis: Thunderbolt connections and network bridges
  - Raspberry Pi/GPUs: Ethernet connections
  - SSH setup with proper gateways and key authentication
- Cluster Configuration: YAML configuration files for your specific topology
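As an illustration of the SSH item above, the following is a minimal sketch of a helper that prints an OpenSSH client config stanza for a worker reached through a gateway. The function name `ssh_host_block` and every host name, address, user, and key path passed to it are hypothetical placeholders, not values taken from Smolcluster. `ProxyJump`, `IdentityFile`, and `IdentitiesOnly` are standard OpenSSH client options.

```shell
# Print an OpenSSH client config stanza for one worker reached via a gateway.
# Hypothetical helper: all arguments are placeholders for your own hosts.
ssh_host_block() {
    alias_name=$1   # short name used as `ssh <alias>`
    host_addr=$2    # worker address as seen from the gateway
    user_name=$3    # login user on the worker
    gateway=$4      # jump host that can reach the worker
    key_path=$5     # private key used for key authentication
    printf 'Host %s\n' "$alias_name"
    printf '    HostName %s\n' "$host_addr"
    printf '    User %s\n' "$user_name"
    printf '    ProxyJump %s\n' "$gateway"
    printf '    IdentityFile %s\n' "$key_path"
    printf '    IdentitiesOnly yes\n'
}

# Example (not run here): append a stanza to your SSH config.
# ssh_host_block pi-worker-1 10.0.0.11 pi gateway.example "$HOME/.ssh/id_ed25519" >> ~/.ssh/config
```

After generating a key pair with `ssh-keygen -t ed25519` and copying the public key to each machine with `ssh-copy-id`, a stanza like this should let `ssh pi-worker-1` connect through the gateway without a password prompt. The Cluster Setup Guide remains the authority on the layout your topology actually needs.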

Installation

Once your cluster is properly configured:

```shell
# Install uv package manager
curl -LsSf https://astral.sh/uv/install.sh | sh

# Clone and install
git clone https://github.com/YuvrajSingh-mist/smolcluster.git
cd smolcluster
uv sync
```
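The installation commands above rely on `curl`, `git`, and `uv` being available on each machine. The following is a small sketch of a preflight check. The function name `missing_tools` is hypothetical and not part of Smolcluster. It uses only the POSIX `command -v` builtin.

```shell
# Print the name of every listed command that is not found on PATH.
# Hypothetical helper for illustration; prints nothing when all tools exist.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}

# Example (not run here): check the tools used by the install steps.
# missing_tools curl git uv
```

Running the check on every node before `uv sync` can surface a missing tool early, which tends to be easier to debug than a failure partway through cluster startup.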

Important: Please refer to the Cluster Setup Guide for detailed hardware setup, networking configuration, and troubleshooting before attempting to run training scripts.